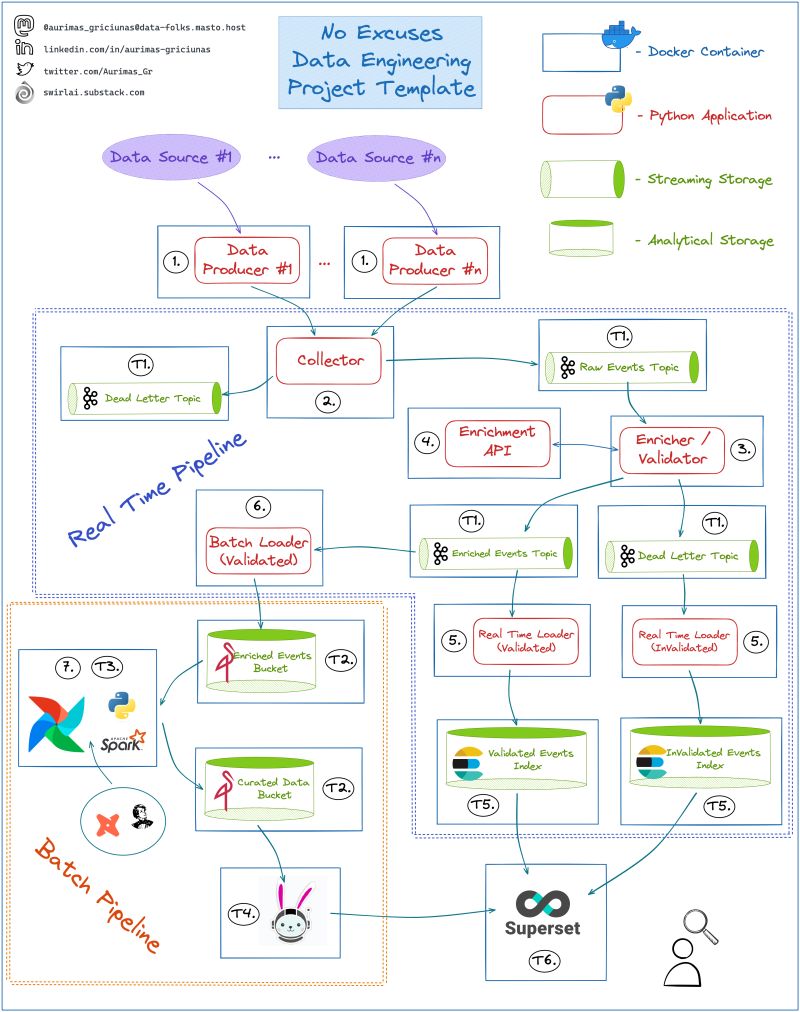

I will use it to explain some of the fundamentals we have been talking about and eventually bring them to life in a tutorial series. I will also extend the template with the missing MLOps parts, so tune in!

Recap:

- Data Producers - Python Applications that extract data from chosen Data Sources and push it to the Collector via REST or gRPC API calls.

- Collector - REST or gRPC server written in Python that takes a payload (JSON or Protobuf), validates that the top-level fields exist and are correct, adds additional metadata, and pushes the data into the Raw Events Topic if validation passes or into a Dead Letter Queue if the top-level fields are invalid.

- Enricher/Validator - Python or Spark Application that validates the schema of events in the Raw Events Topic, performs optional data enrichment, and pushes the results into the Enriched Events Topic if validation passes and enrichment succeeds, or into a Dead Letter Queue if either step fails.

- Enrichment API - API of any flavour implemented with Python that can be called for enrichment purposes by Enricher/Validator. This could be a Machine Learning Model deployed as an API as well.

- Real Time Loader - Python or Spark Application that reads data from the Enriched Events and Enriched Events Dead Letter Topics and writes it in real time to Elasticsearch indexes for analysis and alerting.

- Batch Loader - Python or Spark Application that reads data from the Enriched Events Topic, batches it in memory and writes it to MinIO Object Storage.

- Scripts scheduled via Airflow that read data from the Enriched Events MinIO bucket, validate data quality, and perform deduplication and any additional enrichment. Here you also construct your Data Model to be used later for reporting purposes.
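
To make the Collector's validate-and-route step more concrete, here is a minimal sketch in plain Python. It is not the template's actual implementation: the required field names, the topic names and the `publish` callback (a stand-in for a real Kafka producer call) are all assumptions made for illustration.

```python
import json
import time
import uuid

# Hypothetical values: the required fields and topic names are assumptions.
REQUIRED_FIELDS = {"event_type": str, "schema_version": str, "payload": dict}
RAW_EVENTS_TOPIC = "raw-events"
DEAD_LETTER_TOPIC = "raw-events-dlq"


def validate_top_level(event):
    """Return a list of problems with the top-level fields (empty if valid)."""
    if not isinstance(event, dict):
        return ["event is not a JSON object"]
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in event:
            errors.append(f"missing field: {name}")
        elif not isinstance(event[name], expected_type):
            errors.append(f"wrong type for field: {name}")
    return errors


def route(raw_body, publish):
    """Validate a JSON body, attach metadata and publish to the right topic.

    `publish(topic, message)` stands in for a real producer call.
    Returns the name of the topic the message was sent to.
    """
    try:
        event = json.loads(raw_body)
        errors = validate_top_level(event)
    except json.JSONDecodeError as exc:
        # Unparseable payloads are kept as-is so they can be inspected later.
        event, errors = {"unparsed": raw_body}, [f"invalid JSON: {exc.msg}"]

    # Wrap the event instead of mutating it, so the original stays intact.
    message = {
        "event": event,
        "collector_metadata": {
            "collector_id": str(uuid.uuid4()),
            "received_at": time.time(),
            "errors": errors,
        },
    }
    topic = DEAD_LETTER_TOPIC if errors else RAW_EVENTS_TOPIC
    publish(topic, message)
    return topic
```

Passing `publish` in as a parameter keeps the routing logic testable without a running Kafka instance. The Enricher/Validator could follow the same pattern, with full schema validation in place of the top-level check.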

Stages

- T1. A single Kafka instance that will hold all of the Topics for the Project.

- T2. A single MinIO instance that will hold all of the Buckets for the Project.

- T3. Airflow instance that will allow you to schedule Python or Spark Batch jobs against data stored in MinIO.

- T4. Presto/Trino cluster that you mount on top of Curated Data in MinIO so that you can query it using Superset.

- T5. Elasticsearch instance to hold Real Time Data.

- T6. Superset Instance that you mount on top of Trino Querying Engine for Batch Analytics and Elasticsearch for Real Time Analytics.
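
To connect the Batch Loader from the recap with the MinIO instance in T2, here is a rough sketch of in-memory batching. The class name, the flush thresholds, the object key layout and the `write_object` callback (a stand-in for an actual object storage upload) are hypothetical, not taken from the template.

```python
import json
import time


class BatchBuffer:
    """Collects events in memory and flushes them as one JSON Lines object."""

    def __init__(self, write_object, max_events=500, max_age_seconds=60.0,
                 clock=time.monotonic):
        # write_object(key, body_bytes) stands in for an object storage upload.
        self._write_object = write_object
        self._max_events = max_events
        self._max_age = max_age_seconds
        self._clock = clock
        self._events = []
        self._opened_at = None
        self._flush_count = 0

    def add(self, event):
        """Buffer one event; flush when the batch is full or too old."""
        if not self._events:
            self._opened_at = self._clock()
        self._events.append(event)
        too_big = len(self._events) >= self._max_events
        too_old = self._clock() - self._opened_at >= self._max_age
        if too_big or too_old:
            self.flush()

    def flush(self):
        """Write the current batch and return its key, or None if empty."""
        if not self._events:
            return None
        # Hypothetical key layout: one sequentially numbered object per batch.
        key = f"enriched-events/batch-{self._flush_count:08d}.jsonl"
        lines = (json.dumps(e, sort_keys=True) for e in self._events)
        self._write_object(key, "\n".join(lines).encode("utf-8"))
        self._flush_count += 1
        self._events = []
        self._opened_at = None
        return key
```

In this sketch the age check only runs when a new event arrives, so a real loader would also call `flush()` on a timer and on shutdown to avoid holding a partial batch indefinitely.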